Scale MCP with Dynamic Tool Use

MCP servers were downloaded 18M times in December 2025, representing a 1200% annual increase, and 72% of surveyed technical professionals believe their usage of MCP will grow in the next 12 months.

The incentives for this growth are clear. For MCP users, the more MCP servers are connected to their client tools, the more powerful those tools become. For providers of MCP servers, wrapping core platform capabilities in MCP enables AI-driven distribution.

However, common implementations of MCP have a flaw: when a user's prompt calls for an MCP tool, most agents load every connected MCP tool definition into the context window. If a client is connected to hundreds of MCP tools, more than 98% of those upfront tokens can go to waste.

A strong solution is dynamic tool use, which significantly reduces the number of tokens needed.

The MCP Problem

MCP is an open standard that lets AI clients (like Cursor) connect to MCP servers (like GitHub or Figma) without custom integration work. Every proprietary software system will soon have an MCP server wrapper so that AI clients can use it. Over time, there will be more MCP servers and more MCP clients connecting to them.

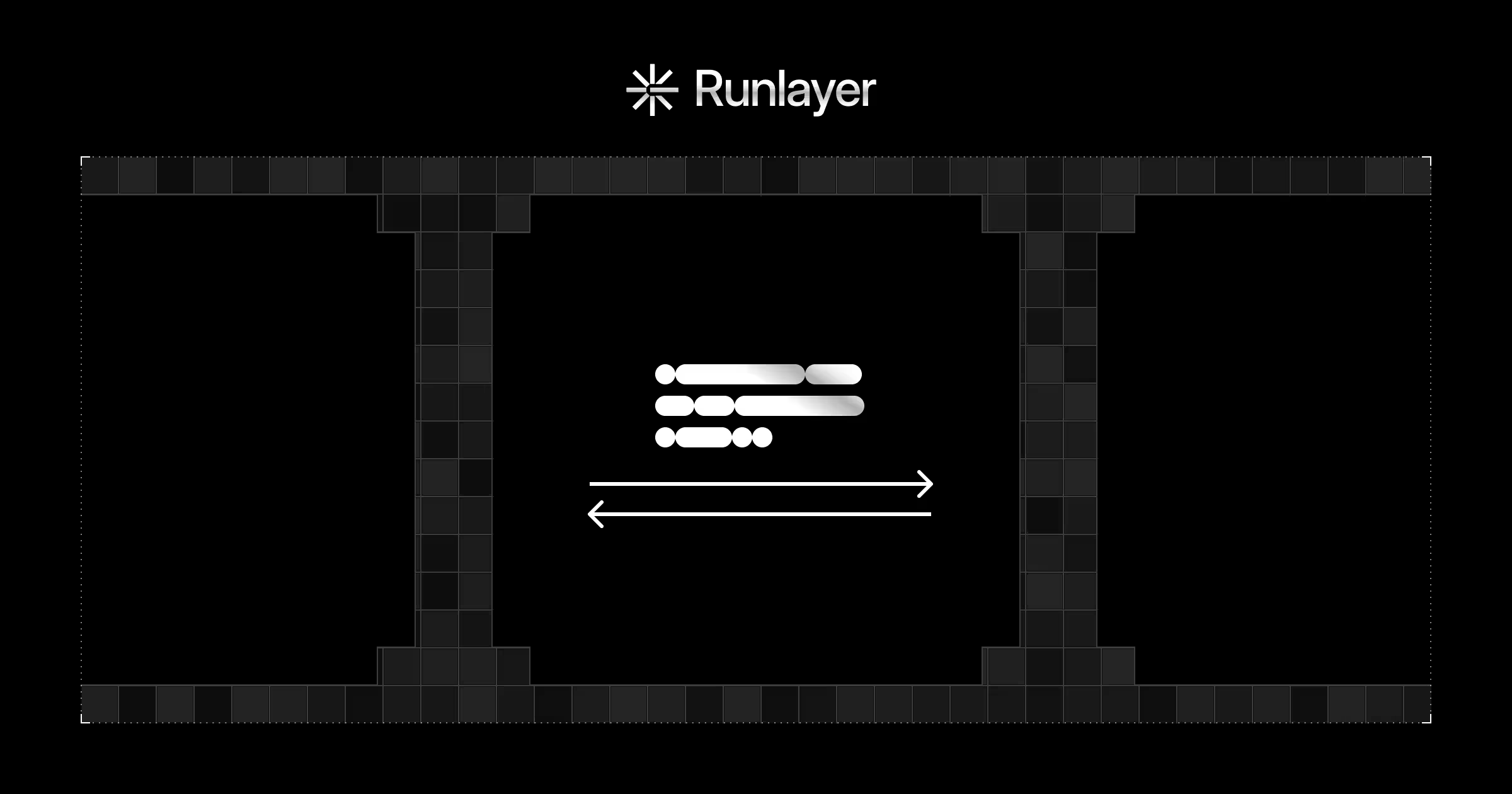

However, even when there are hundreds or thousands of MCP tools, all of the MCP tool definitions are loaded simultaneously into the context window so the agent can choose which tools it needs.

We can make this concrete with an example: if a user connects their Cursor instance to the Figma MCP, GitHub MCP, and a dozen other MCP servers, and then delegates a task to Cursor, the agent harness will load all MCP tool definitions into the context window.

That amounts to hundreds of possible tools for Figma, GitHub, etc., even though the task at hand might only need one (or none). Regardless of the task complexity, the user is incurring token costs for every single definition. Even worse, LLMs can grow confused due to the volume of MCP definitions relative to the initial prompt.

The solution: Dynamic Tool Use

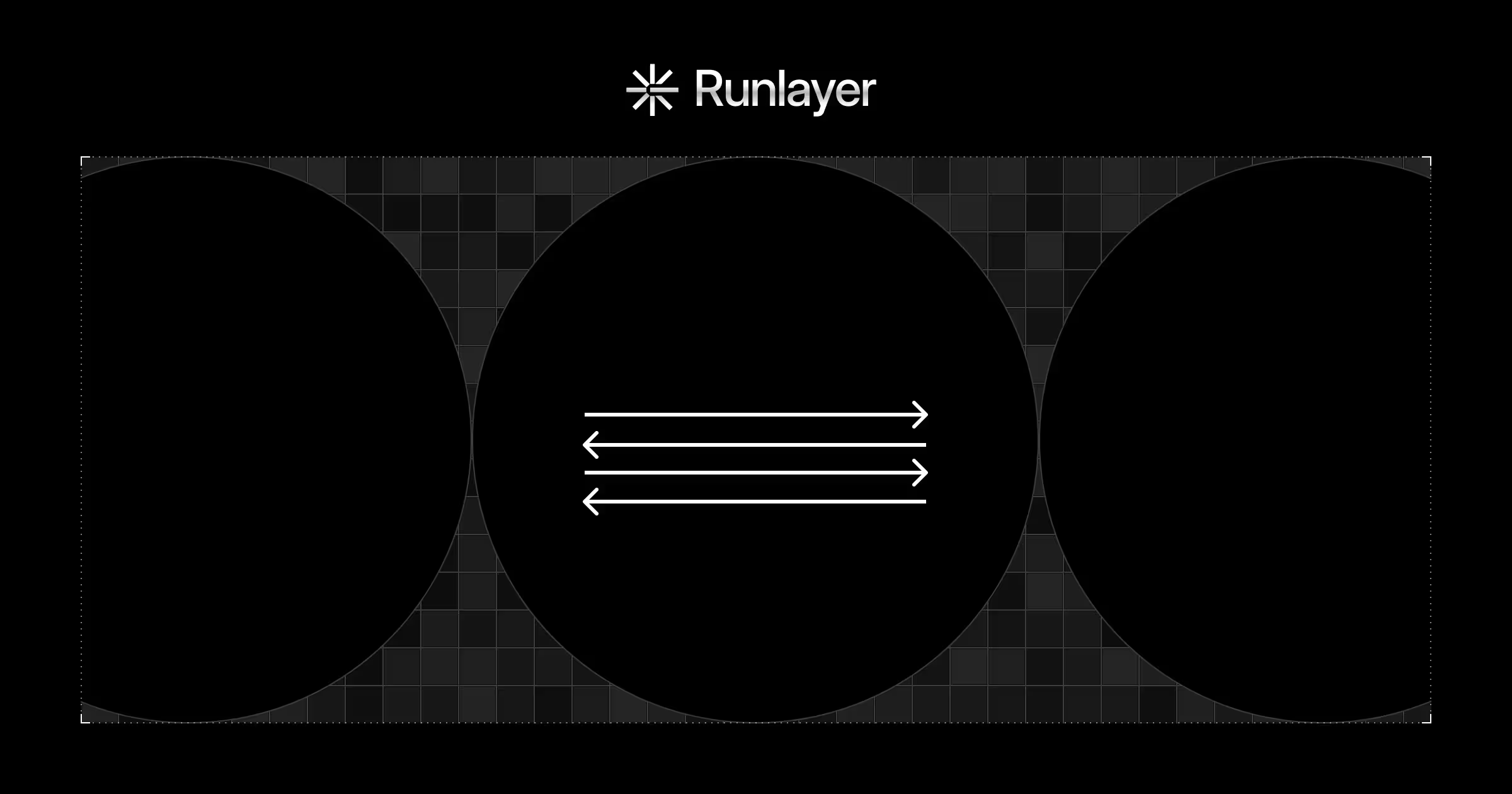

In the dynamic tool-use approach, we no longer load all MCP tool definitions into the model’s context window. Instead, no matter how many tools and MCPs are added, we only expose two tools: search_tool and execute_tool.

- search_tool: The model uses this tool to look for other tools using a keyword-based search. Far fewer tool definitions are returned, saving token costs and keeping the context focused.

- execute_tool: Once the model has found its desired tool, execute_tool runs it.

The difference is that we spend one extra agent turn processing the results from search_tool, instead of calling the desired tool directly from the context window.
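The two tools can be sketched in a few lines of Python. This is a minimal illustration rather than any particular product's implementation: the `Tool` and `ToolRegistry` names, the word-overlap scoring, and the `limit` parameter are all assumptions made for the example.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Tool:
    name: str
    description: str
    handler: Callable[..., Any]


class ToolRegistry:
    """Hypothetical registry that keeps every tool outside the context window."""

    def __init__(self) -> None:
        self._tools: dict[str, Tool] = {}

    def register(self, tool: Tool) -> None:
        self._tools[tool.name] = tool

    def search_tool(self, query: str, limit: int = 3) -> list[dict[str, str]]:
        """Return the best keyword matches as small definition stubs."""
        keywords = set(query.lower().split())
        scored = []
        for tool in self._tools.values():
            text = f"{tool.name} {tool.description}".lower().replace("_", " ")
            score = len(keywords & set(text.split()))  # naive word overlap
            if score > 0:
                scored.append((score, tool))
        scored.sort(key=lambda pair: pair[0], reverse=True)
        return [{"name": t.name, "description": t.description}
                for _, t in scored[:limit]]

    def execute_tool(self, name: str, arguments: dict[str, Any]) -> Any:
        """Run the tool the model selected from the search results."""
        if name not in self._tools:
            raise KeyError(f"Unknown tool: {name}")
        return self._tools[name].handler(**arguments)


registry = ToolRegistry()
registry.register(Tool("get_weather",
                       "Get current weather information for a location",
                       lambda location: f"Sunny in {location}"))
registry.register(Tool("create_issue",
                       "Create an issue in a GitHub repository",
                       lambda title: f"Created issue: {title}"))

# Turn 1: the model searches. Turn 2: it executes the best match.
matches = registry.search_tool("weather for a location")
result = registry.execute_tool(matches[0]["name"], {"location": "Berlin"})
print(matches[0]["name"], "->", result)
```

A production search would likely filter stop words or use a ranking function such as BM25; plain word overlap is used here only to keep the sketch short.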

Without Dynamic Tool Use:

- User sends a prompt that requires one or more tools.

- All tool definitions (including MCPs) are loaded into the context window

- Model calls the desired tools

With Dynamic Tool Use:

- User makes a request

- Model searches for tools via keyword-based search_tool

- Far fewer tool definitions are loaded into the context window

- Model calls the desired tools

This works because we delegate search to computation rather than the context window. No longer is the LLM choosing the right tool amongst all possibilities; the search tool instead returns a tiny subset of all available tools, which can be far cheaper to process.
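To see the two flows side by side, the sketch below counts how many tool definitions end up in context in each case. The catalog of 200 placeholder tools, the `static_flow` and `dynamic_flow` helpers, and the substring matching are hypothetical stand-ins, not a real agent harness.

```python
# Hypothetical catalog standing in for hundreds of connected MCP tools.
CATALOG = {
    f"tool_{i}": {"name": f"tool_{i}",
                  "description": f"Placeholder tool number {i}"}
    for i in range(200)
}
CATALOG["get_weather"] = {
    "name": "get_weather",
    "description": "Get current weather information for a location",
}

# The only two definitions the model sees up front with dynamic tool use.
META_TOOLS = [
    {"name": "search_tool", "description": "Find tools by keyword"},
    {"name": "execute_tool", "description": "Run a tool by name"},
]


def search_tool(query: str, limit: int = 3) -> list[dict]:
    """Keyword match performed in ordinary code, outside the model."""
    terms = query.lower().split()
    hits = [
        d for d in CATALOG.values()
        if any(t in d["name"] or t in d["description"].lower() for t in terms)
    ]
    return hits[:limit]


def static_flow() -> list[dict]:
    """Without dynamic tool use: every definition goes into context."""
    return list(CATALOG.values())


def dynamic_flow(query: str) -> list[dict]:
    """With dynamic tool use: the two meta-tools plus the search results."""
    context = list(META_TOOLS)
    context.extend(search_tool(query))
    return context


static_context = static_flow()
dynamic_context = dynamic_flow("weather")
print(len(static_context), "definitions in context without dynamic tool use")
print(len(dynamic_context), "definitions in context with dynamic tool use")
```

In this toy setup the static flow puts 201 definitions in front of the model, while the dynamic flow puts 3 there: the two meta-tools and the single match.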

When to use dynamic tool use

Dynamic tool use saves tokens when the client is connected to many tools. However, it also adds latency because an extra agent turn is added to search for tools.

Choose dynamic tool use if your AI client needs access to hundreds or thousands of tools and latency isn’t critical. Don’t use dynamic tool use if latency is critical and token costs are not the bottleneck.

In most modern AI applications, latency is expected, token costs eat up the most margin, and MCPs often add new capabilities. Therefore, enabling dynamic tool use typically makes sense.

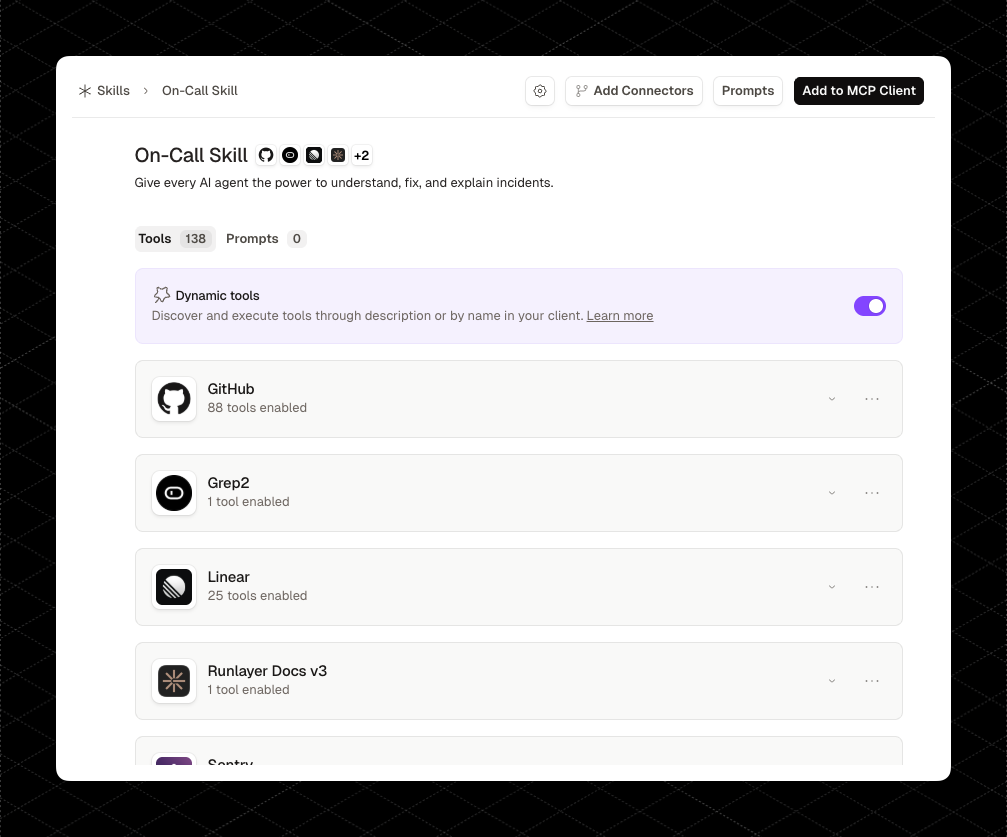

Putting dynamic tool use into practice with Agent Skills

Agent skills demonstrate the advantages of dynamic tool use.

Skills are markdown files with specific instructions that accomplish specific goals, such as "Create Standup Slide Deck." They're convenient for wrapping a concrete function into a directory.

Skills can reference as many tools as they need, including MCP tools, which makes dynamic tool use important.

A typical MCP tool definition, shown here inside a tools/list response, looks like this and takes about 130 tokens:

    {
      "jsonrpc": "2.0",
      "id": 1,
      "result": {
        "tools": [
          {
            "name": "get_weather",
            "title": "Weather Information Provider",
            "description": "Get current weather information for a location",
            "inputSchema": {
              "type": "object",
              "properties": {
                "location": {
                  "type": "string",
                  "description": "City name or zip code"
                }
              },
              "required": ["location"]
            }
          }
        ],
        "nextCursor": "next-page-cursor"
      }
    }

When a Skill references tools without dynamic tool use, every available tool definition is loaded into context, at a cost of about 130 tokens per tool each time the Skill runs.

With dynamic tool use, the model searches for only the tools it needs, so the cost scales with the handful of definitions returned rather than with the total number of connected tools.
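A back-of-the-envelope calculation makes the savings concrete. The sketch below takes the roughly 130-token figure above at face value and assumes, purely for illustration, that each search returns three definitions and that the two meta-tools cost about as much as an ordinary definition. It ignores the tokens spent on the search query, the result formatting, and the extra agent turn.

```python
TOKENS_PER_DEFINITION = 130  # approximate per-tool figure quoted above


def static_tokens(num_tools: int) -> int:
    """Definition tokens when every connected tool is loaded up front."""
    return num_tools * TOKENS_PER_DEFINITION


def dynamic_tokens(results_per_search: int = 3, meta_tools: int = 2) -> int:
    """Definition tokens when only meta-tools and search results are loaded.

    Both defaults are illustrative assumptions, not measured values.
    """
    return (meta_tools + results_per_search) * TOKENS_PER_DEFINITION


def savings(num_tools: int, results_per_search: int = 3) -> float:
    """Fraction of definition tokens avoided by dynamic tool use."""
    before = static_tokens(num_tools)
    after = dynamic_tokens(results_per_search)
    return 1 - after / before


for n in (10, 100, 300):
    print(n, static_tokens(n), dynamic_tokens(), f"{savings(n):.1%}")
```

Under these assumptions, 300 connected tools cost 39,000 definition tokens up front versus 650 with dynamic tool use, a reduction of about 98%. With only 10 tools the reduction falls to 50%, and below six tools there is no saving at all, which is why the approach pays off mainly for large tool catalogs.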

On Runlayer, teams can enable this with a single toggle. No custom implementation needed.

Conclusion

When we load all MCP tool definitions into an LLM, we are essentially asking the model to search for its desired tool implicitly, inside its own context window. With dynamic tool use, we allow the model to delegate that search to a simpler, deterministic algorithm, which is far cheaper to run.

The lesson: Intelligence is expensive, computation is cheap. Where possible, move problem-solving to computation.

Runlayer supports dynamic tool use for MCPs and custom skills. Book a demo.
